AI homework ethics matters because AI can either sharpen a student’s thinking or quietly replace it. It means using tools like ChatGPT to support learning (brainstorming, organizing, checking understanding) without crossing the line into having AI do the work for you. When families, teachers, and students agree on clear expectations, AI becomes a tutor, not a shortcut.

Ethical AI homework use means AI can help you learn, but it cannot think or write for you. Using AI to generate ideas, clarify confusing concepts, or check grammar is usually acceptable if your teacher allows it, but submitting AI-written answers as your own is cheating and violates academic integrity. Always follow school rules and be transparent about how you used AI.

AI Homework Ethics: Why It Matters

AI tools are now common in schools and homes, and many students already use them for assignments, often without clear guidance. Without shared standards for AI homework ethics, students risk plagiarism, patchy understanding, and inconsistent discipline.

International and national education groups stress that AI in education must support learning goals, protect privacy, and uphold academic integrity. When students learn to use AI responsibly, they build digital citizenship skills they will need in college, careers, and civic life.

Key Principles of AI Homework Ethics

Principle 1: Learning First, Convenience Second

Ethical AI homework use starts with a simple question: “Does this help me learn, or help me avoid learning?” Educators emphasize that assignments exist to develop skills like writing, problem-solving, and critical thinking, not just to produce correct answers.

Using AI to brainstorm, outline, or get unstuck can support those skills, but letting AI generate full solutions skips the struggle that builds understanding. In AI homework ethics, learning outcomes—not speed or convenience—must be the priority.

Principle 2: Academic Integrity Still Applies

Many academic integrity policies treat unauthorized AI-written work the same as copying from a classmate or a website. Passing off AI-generated essays, problem sets, or code as original work is considered plagiarism or cheating by many schools and universities.

Several school and district guidelines now explicitly state that students must either avoid AI for certain assessments or clearly disclose and cite how AI was used. AI homework ethics means the author of the submitted work is still the student, not the algorithm.

Principle 3: Transparency Is Non-Negotiable

Universities and teaching centers urge students to be open about AI use—where allowed—by acknowledging it in their process or citations. Hiding AI assistance breaks trust and makes it difficult for teachers to evaluate genuine skills.

Some guidelines recommend simple statements such as “I used an AI tool to brainstorm ideas and then rewrote the final draft myself,” when permitted. In AI homework ethics, honesty about tools used matters as much as the final answer.

Principle 4: Follow Local Rules and “Traffic Light” Boundaries

Responsible AI frameworks for schools encourage clear “levels” of allowed AI use, such as green (encouraged), amber (conditional), and red (prohibited). For example, brainstorming with AI might be green, grammar checking amber, and having AI write the whole assignment red.

Because policies vary widely between institutions and even teachers, students must check class-specific rules about AI on each syllabus or assignment. AI homework ethics includes respecting those local boundaries, not just personal opinions about what feels fair.

Principle 5: Protect Privacy and Data

Ethical guidelines for AI in education highlight privacy, data security, and avoiding exposure of sensitive student information. Typing full names, IDs, or detailed personal stories into public AI tools can create long-term digital records students cannot control.

AI homework ethics therefore involves cautious information sharing—using anonymized or fictional details when practicing and following school-approved tools when personal data is involved.

Step-by-Step How-To: Using AI for Homework Ethically

Step 1: Check the Rules First

- Read your course syllabus or assignment sheet for AI policies (some explicitly allow, limit, or ban AI).

- If unclear, ask the teacher directly: “Can I use AI to help brainstorm or review my work?”

- If your school or district has an AI policy, treat it as the baseline for AI homework ethics.

This step protects you from accidental policy violations and shows respect for your teacher’s expectations.

Step 2: Use AI as a Tutor, Not a Ghostwriter

- Ask AI to explain concepts in simpler terms, compare examples, or walk through a solution step-by-step instead of just giving the final answer.

- Use AI to suggest practice questions or variations you then solve on your own.

- When teaching yourself with AI, keep a separate notebook where you restate ideas in your own words to confirm understanding.

Research-informed teaching guidance notes that using AI for explanation and practice can support critical thinking if students still do their own mental work.

Step 3: Keep Drafting and Final Writing Your Own

- If AI is allowed, use it to brainstorm topics, generate outlines, or collect perspectives, then close the tool while you write your first full draft yourself.

- After drafting, you may (where allowed) ask AI to flag unclear sentences or grammar issues, then choose what to revise rather than accepting every suggestion blindly.

- Avoid pasting AI paragraphs directly into your assignment; instead, write the ideas in your own words, add original examples, and cite the sources of any information you use.

AI homework ethics views AI-generated text as a starting point, not a final product.

Step 4: Be Honest About AI Assistance

- When policies permit AI, include a brief note or reflection explaining how you used it (for brainstorming, explanation, or proofreading).

- If AI provided specific facts or structured arguments, verify them with reliable sources and cite those sources, not the AI tool itself.

- Never claim “no AI used” on an honor statement if you did use AI; misrepresentation can carry serious academic penalties.

Transparency aligns your work with academic integrity standards and emerging institutional guidelines on AI.

Step 5: Guard Privacy and Sensitive Topics

- Avoid entering full names, locations, or detailed personal histories into public AI systems.

- For reflective or sensitive assignments (family stories, trauma, identity), rely more on human support (teachers, counselors, family) than AI tools.

- Prefer school-approved or locally hosted AI tools when personal or school-related data is involved.

These practices align with global recommendations that stress data protection as a core part of ethical AI in education.

Step 6: Watch for Bias and Errors

- Use AI responses as hypotheses to check, not truths to copy; fact-check using trusted sources such as textbooks or academic databases.

- If AI gives a questionable or biased answer, analyze why it might be wrong or incomplete; this builds critical digital literacy.

- When possible, discuss such cases in class as examples of why human judgment must remain central.

Educational resources emphasize that students should learn to question AI outputs, not simply trust them.

Common Mistakes (or Myths) About AI Homework Ethics

Myth 1: “If AI is free to use, it’s fair to use for anything.”

Many students assume that if access is open, all uses are allowed. In reality, school policies often prohibit using AI to generate full assignments, even if the tool itself is widely available.

Myth 2: “If I edit the AI text a little, it’s my work.”

Lightly editing AI-generated text generally does not make it original and can still violate academic integrity rules. Institutions increasingly treat undisclosed AI-generated drafts as a form of ghostwriting.

Myth 3: “Teachers won’t know if I used AI.”

While detection tools are imperfect, educators are developing new assessment designs and policies that make AI misuse easier to spot and less useful. Sudden changes in writing style, lack of process work, or inability to explain answers can all raise red flags.

Myth 4: “Ethical AI use is just about avoiding cheating.”

Ethical use also includes fairness, access, privacy, and avoiding over-reliance that weakens genuine skills. Schools are being urged to teach AI literacy so students understand broader social and ethical impacts, not just discipline rules.

AI Homework Ethics Quick Reference Table

| AI Use Case | Likely Ethical Status (Typical Policies) | Notes for Students |
|---|---|---|
| Brainstorming ideas or topics | Often allowed (green) when disclosed and permitted by the teacher | Use as a springboard, then create your own outline and argument. |
| Explaining a concept you do not understand | Often allowed (green) as study support | Treat AI like a tutor; still verify with class materials. |
| Generating a full essay or problem set answers | Commonly prohibited (red) and considered cheating | Violates academic integrity; your work must be your own. |
| Grammar and clarity suggestions on your draft | Often conditional (amber) depending on course rules | Ask if this is allowed; keep your voice and ideas central. |
| Translating entire assignments into another language | Often conditional or discouraged (amber/red) | May hide language-learning goals or create unfair advantages. |
| Drafting code solutions for graded assignments | Frequently restricted or banned (red) in CS courses | Use AI more for debugging explanations than full solutions. |
| Checking your understanding before an exam | Usually acceptable as extra practice (green) | Do not ask for exact test questions; focus on concepts. |

Policies vary; always follow your institution’s specific AI guidelines when interpreting this table.
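For readers who like to see the table as a lookup, here is a minimal Python sketch of the traffic-light idea. The names `Light`, `TYPICAL_STATUS`, and `check_use` are hypothetical, the default ratings simply mirror the typical statuses in the table, and treating unlisted uses as amber is an assumption rather than any school's actual rule. A class-specific policy, when supplied, always overrides the defaults.

```python
from enum import Enum


class Light(Enum):
    GREEN = "often allowed"
    AMBER = "conditional: ask your teacher first"
    RED = "commonly prohibited"


# Hypothetical defaults mirroring the table; not any institution's real policy.
# Translation is listed as amber/red in the table; AMBER is used here as a simplification.
TYPICAL_STATUS = {
    "brainstorming": Light.GREEN,
    "concept_explanation": Light.GREEN,
    "full_essay_or_answers": Light.RED,
    "grammar_suggestions": Light.AMBER,
    "translating_assignment": Light.AMBER,
    "graded_code_solutions": Light.RED,
    "exam_practice": Light.GREEN,
}


def check_use(use_case, class_policy=None):
    """Return the light for a use case, preferring the class policy over the defaults."""
    policy = class_policy or {}
    if use_case in policy:
        return policy[use_case]
    # Assumption: anything not listed is treated as AMBER, meaning "ask before using AI".
    return TYPICAL_STATUS.get(use_case, Light.AMBER)


if __name__ == "__main__":
    # A teacher who bans AI brainstorming overrides the typical green rating.
    strict_class = {"brainstorming": Light.RED}
    print(check_use("brainstorming").name)
    print(check_use("brainstorming", strict_class).name)
```

The override argument is the point of the sketch: the same use can be green in one class and red in another, so the local rule is checked before any general default.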

Key Takeaways

- AI homework ethics means using AI to support, not replace, your learning, and always respecting academic integrity rules.

- Passing AI-generated work off as your own is widely considered plagiarism or cheating, even if you “tweak” the text.

- Clear communication with teachers and careful reading of course policies are essential, because AI rules differ between classes and schools.

- Ethical use includes transparency, privacy protection, and critical evaluation of AI outputs, not just avoiding detection.

- When used within guidelines, AI can function as a tutor that builds understanding, confidence, and digital literacy.

AI Homework Ethics FAQ

Q: Is it ever okay to have AI write part of my homework?

A: Some instructors may allow AI to help brainstorm, outline, or suggest revisions, but most prohibit AI from writing graded answers; always follow the specific rules for each class and disclose any permitted AI use.

Q: If I ask AI to help with math or coding, is that cheating?

A: Using AI to walk through similar practice problems or explain steps can be ethical study support, but submitting AI-solved graded problems as your own work is usually considered misconduct.

Q: Do I have to cite AI in my homework?

A: Many institutions recommend acknowledging AI in a note or methodology section when it was used, and citing actual sources for facts or quotations rather than the AI system itself.

Q: How can teachers design homework that encourages ethical AI use?

A: Guidance for educators suggests setting clear AI boundaries, emphasizing process (drafts, reflections), and using authentic tasks that require personal experience or in-class work, which AI cannot easily replicate.

Q: What should parents tell kids about AI and homework?

A: Parenting and school policy resources advise families to talk openly about honesty, skill-building, and school rules, framing AI as a tool to understand work—not a way to avoid doing it.

Conclusion

AI homework ethics is about shaping students who can use powerful tools wisely, not banning technology out of fear. When students, families, and educators agree that real learning, integrity, transparency, and privacy come first, AI becomes an ally in education instead of a shortcut to trouble. With clear guidelines and ongoing conversations, young people can grow into responsible, thoughtful users of AI at school and beyond.

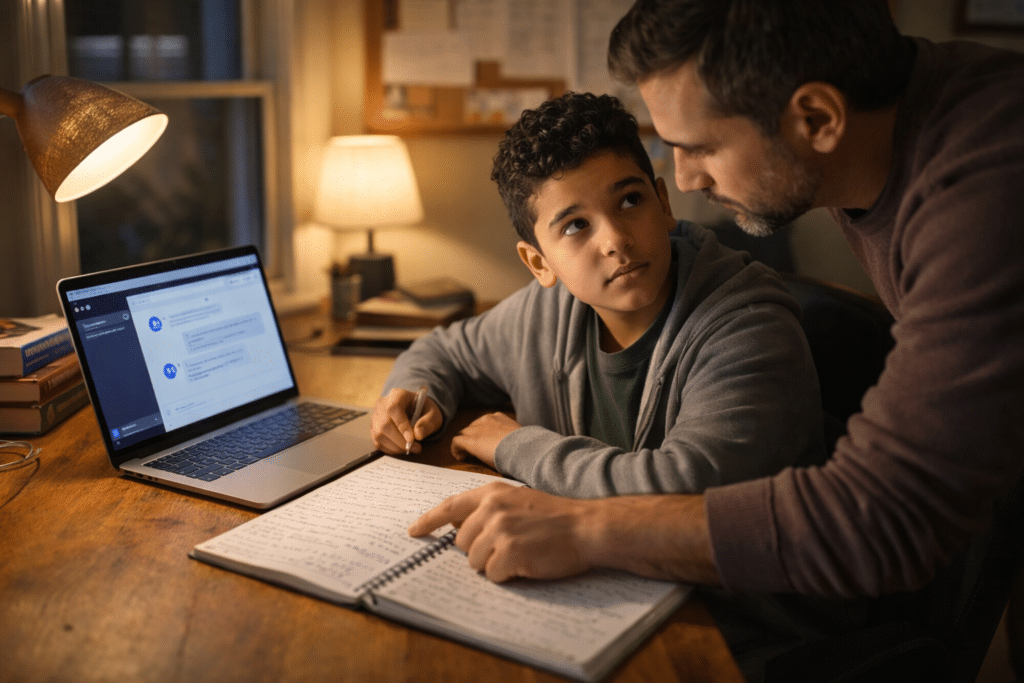

Sources (selected)

- Summit Learning Charter – “Should Students Use AI for Homework? Pros and Cons.”

- University teaching centers and AI ethics guidance – KU CTE, Harvard Bok Center, Duke University guidelines.

- World Economic Forum – “7 principles on responsible AI use in education.”

- Structural Learning – “Creating an AI Policy for Schools 2025.”

- School and district policy examples on AI acceptable use.

- EU “Ethical guidelines for educators on using artificial intelligence.”

- Classroom AI ethics activities and student literacy resources.
